NUMA? What’s NUMA?

Having to deal with a Non-Uniform Memory Access (NUMA) machine is becoming more and more common. This is true whether you are part of an engineering research center with access to one of the first Intel SCC-based machines, a virtual machine hosting provider with a bunch of dual 2376 AMD Opteron pieces of iron in your server farm, or even just a Xen developer using a dual socket E5620 Xeon based test-box (any reference to the recent experiences of the author of this post is purely accidental :-D). Very quickly: NUMA means that the memory access time of a program running on a CPU depends on the relative distance between that CPU and the memory being accessed. In fact, most NUMA systems are built in such a way that each processor has its own local memory, on which it can operate very fast. On the other hand, fetching data from and storing data to remote memory (that is, memory local to some other processor) is more complex and quite a bit slower. Therefore, while hardware engineers bang their heads against cache coherency protocols and routing strategies for the system buses of such machines, the most urgent issue for us, OS and hypervisor developers, is the following: how can we couple scheduling and memory management so that most of the accesses for most of our tasks/VMs stay local?
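To make the local versus remote trade-off a bit more tangible, here is a minimal sketch of how the average access time moves with the share of local accesses. The function name and both latency values are assumptions made up for illustration; they are not measurements from any of the machines mentioned above.

```python
# Illustrative model only: the latencies below are made-up placeholders,
# not measurements from any machine mentioned in this post.

def average_access_time(local_fraction, local_ns, remote_ns):
    """Average access time when `local_fraction` of accesses hit local memory."""
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0 and 1")
    return local_fraction * local_ns + (1.0 - local_fraction) * remote_ns

LOCAL_NS = 100.0   # hypothetical latency of a local access
REMOTE_NS = 150.0  # hypothetical latency of a remote access

for fraction in (1.0, 0.5, 0.0):
    t = average_access_time(fraction, LOCAL_NS, REMOTE_NS)
    print(f"{fraction:.0%} local accesses -> {t:.0f} ns on average")
```

The weighted average is deliberately crude (no caches, no contention on the interconnect), but it shows why keeping accesses local is the thing to aim for.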

NUMA and Xen

The Xen hypervisor already deals with NUMA in a number of ways. For example, each domain has its own “node affinity”, which is the set of NUMA nodes of the host from which memory for that domain is allocated (in equal parts). That becomes very important as soon as many domains start running memory-intensive workloads on a shared host. In fact, as soon as the majority of the memory accesses become remote, the degradation in performance is likely to be noticeable. An effective technique to deal with this architecture in a virtualization environment is virtual CPU (vCPU) pinning. I mean, if a domain can only run on a subset of the host’s physical CPUs (pCPUs), it is very easy to turn all its memory accesses into local ones, isn’t it? Actually, that is exactly what Xen does by default: at domain creation time, it constructs the domain’s node affinity based on which nodes these pCPUs belong to… provided the domain specifies some pinning for its vCPUs in its config file, with something like the below (assuming CPUs #0 to #3 belong to the same NUMA node):

…

vcpus = '4'

memory = '1024'

cpus = "0-3"

…
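The idea of deriving a node affinity from the pinning can be sketched in a few lines. This is not Xen’s code, just an illustration: the node-to-pCPU table is a hypothetical layout for a 2-node, 16-CPU host, the helper names are made up, and the parser only handles plain numbers and ranges, not everything the real config syntax may accept.

```python
# Hypothetical topology for a 2-node, 16-CPU host: which pCPUs sit on which node.
# On a real machine this mapping comes from the hardware, not from a table like this.
NODE_TO_CPUS = {
    0: set(range(0, 8)),
    1: set(range(8, 16)),
}

def parse_cpu_list(spec):
    """Expand a string such as "0-3" or "0-3,8,10-11" into a set of pCPU numbers."""
    cpus = set()
    for part in spec.split(","):
        part = part.strip()
        if "-" in part:
            first, last = part.split("-")
            cpus.update(range(int(first), int(last) + 1))
        else:
            cpus.add(int(part))
    return cpus

def node_affinity(cpu_spec, node_to_cpus=NODE_TO_CPUS):
    """Return the sorted nodes that contain at least one of the pinned pCPUs."""
    pinned = parse_cpu_list(cpu_spec)
    return sorted(node for node, cpus in node_to_cpus.items() if cpus & pinned)

print(node_affinity("0-3"))  # pinning that stays inside node 0
print(node_affinity("6-9"))  # pinning that straddles both nodes
```

With this layout, a pinning that stays within one node yields a single-node affinity, so all of the domain’s memory can come from that node; a pinning that straddles nodes brings the striping back.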

This is quite effective, to the point that the (old) xend-based toolstack, if there is no vCPU pinning in a domain config file, tries to figure out on its own where to put the domain, and pins its vCPUs there! That could be seen as a reasonable thing to do, and it surely brings performance benefits. However, vCPU pinning is quite inflexible, as the VM in question won’t be allowed to run outside that set of pCPUs for any reason. This means that, no matter whether it is the most (artificially-)intelligent of the toolstacks or the most careful of the sysadmins setting it up, you are in constant danger of underutilizing or badly utilizing the hardware resources you paid a lot of money for.

As of now, the new xl-based toolstack does not have anything like that. Yes, there is the possibility of dealing with NUMA by partitioning the system using cpupools (available in the upcoming release of Xen, 4.2), as explained in Xen 4.2: cpupools. Again, this could be “The Right Answer” for many needs and occasions, but it has to be carefully considered and set up by hand. What would be nice to have is a self-configuring solution that automatically jumps into the game and maximizes overall system performance.

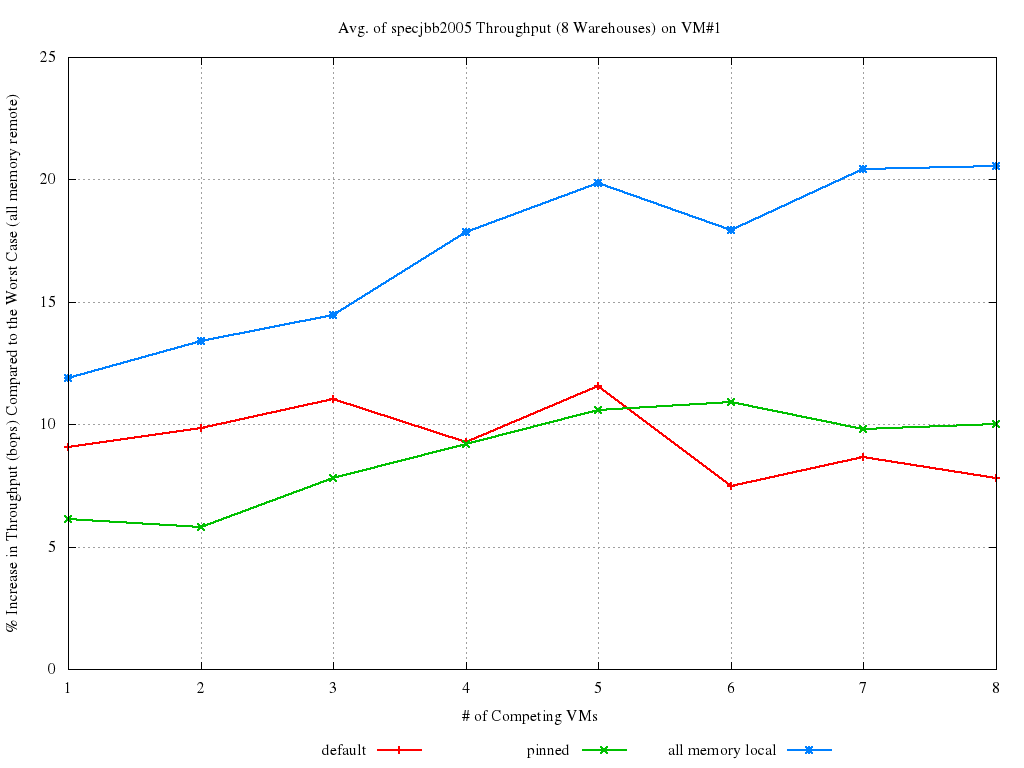

Some numbers or, even better, some graphs!

Let’s take a break from all this talking and look at what we are discussing more concretely. So the question is: can we run some memory-intensive benchmark within a bunch of competing VMs (on a NUMA host) and see if this local/remote memory access thing really matters? Well, actually, yes we can. Unfortunately, no matter how badly you are hoping the benchmarker to be the research lab guy with login credentials for an SCC platform… he actually is the poor Xen developer with the 2-socket Xeon. So plots showing what happened on a 2-node, 16-core system are what we can show. For now.

The benchmark of choice was SpecJBB2005, under the assumption that it would generate quite a bit of stress on the memory subsystem, which turned out to be the case. The host was the 16-CPU, 2-NUMA-node, Xeon-based system with 12GB of RAM (2GB of which were reserved for Dom0). The Linux kernel for Dom0 was 3.2, and Xen was xen-unstable. Guests had 4 vCPUs and 1GB of RAM each. Numbers come from running the benchmark on an increasing number (1 to 8) of Xen PV guests at the same time, and repeating each run 5 times for each of the VM configurations below:

- default is the default Xen and xl behaviour without any vCPU pinning at all;

- pinned means VM#1 was pinned on NODE#0 after being created. This implies its memory was striped across both nodes, but it can only run on the first one;

- all memory local (best case) means VM#1 was created on NODE#0 and kept there. That implies all its memory accesses are local, and we thus call it the best case;

- all memory remote (worst case) means VM#1 was created on NODE#0 and then moved (by explicitly pinning its vCPUs) to NODE#1. That implies all its memory accesses are remote, and we thus call it the worst case.
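A back-of-the-envelope way of comparing the four configurations is to estimate which share of VM#1’s memory accesses can be local in each of them. The sketch below is only a rough model with two loud assumptions that do not come from the benchmark itself: accesses are spread evenly over the VM’s memory, and the VM spends equal time on every node it is allowed to run on. Names are made up for illustration.

```python
def expected_local_fraction(memory_share, run_nodes):
    """Estimate the share of local accesses for one VM.

    memory_share: dict mapping node -> fraction of the VM's memory on that node.
    run_nodes: nodes the VM's vCPUs may run on (assumed: equal time on each).
    """
    run_nodes = list(run_nodes)
    time_per_node = 1.0 / len(run_nodes)
    return sum(time_per_node * memory_share.get(node, 0.0) for node in run_nodes)

# (memory placement, nodes the vCPUs may run on) for VM#1 on the 2-node host
CONFIGS = {
    "default": ({0: 0.5, 1: 0.5}, [0, 1]),
    "pinned": ({0: 0.5, 1: 0.5}, [0]),
    "all memory local (best case)": ({0: 1.0}, [0]),
    "all memory remote (worst case)": ({0: 1.0}, [1]),
}

for name, (memory_share, run_nodes) in CONFIGS.items():
    fraction = expected_local_fraction(memory_share, run_nodes)
    print(f"{name}: about {fraction:.0%} of accesses local")
```

Under these assumptions default and pinned come out the same, since in both cases half of the memory sits on the other node. The model says nothing about load, caches, or how the scheduler actually moves vCPUs around, so it is a way to reason about the setups, not a prediction of the scores.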

In all the experiments, it is only VM#1 that was pinned/moved. All the other VMs have their memory “striped” between the two nodes and are free to run everywhere. The final score achieved by SpecJBB on VM#1 is reported below. As SpecJBB output is in terms of “business operations per second (bops)”, higher values correspond to better results.

First of all, notice how small the standard deviation is for all the runs: this just confirms SpecJBB is a good benchmark for our purposes. The most interesting lines to look at and compare are the red and the blue ones. Evidence is there that things can improve quite a bit, even on such a small box, especially in the presence of heavy load (6 to 8 VMs). Let’s also look at the percent increase in performance of each run with respect to the worst case (all memory remote):

This second graph makes it even clearer how NUMA placement and scheduling account for a ~10% to 20% (depending on the load) impact on performance. The default Xen behavior is certainly not as bad as it could be: default almost always manages to get ~10% better performance than the worst case. Also, although pinning can help in keeping performance consistent, it doesn’t always yield an improvement (and when it does, it is only by a few percentage points). There is a ~10% performance increase to gain (and even more in heavily loaded cases) if we manage to get default close enough to all memory local, and that should be the way to go!
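For the curious, the percent increase with respect to the worst case is simple arithmetic on the mean scores of the repeated runs. Here is a minimal sketch of that computation; the scores in it are invented placeholders, NOT the numbers behind the graphs, and the function name is just for illustration.

```python
from statistics import mean, stdev

def percent_increase(score, baseline):
    """Relative improvement of `score` over `baseline`, in percent."""
    return (score - baseline) / baseline * 100.0

# Placeholder scores (bops) for five repetitions at one load level.
# These are invented for illustration and are NOT the results plotted in this post.
runs = {
    "all memory remote (worst case)": [1000, 1010, 990, 1005, 995],
    "default": [1100, 1090, 1110, 1105, 1095],
}

baseline = mean(runs["all memory remote (worst case)"])
for name, scores in runs.items():
    avg = mean(scores)
    print(f"{name}: mean={avg:.0f} bops, stdev={stdev(scores):.1f}, "
          f"{percent_increase(avg, baseline):+.1f}% vs worst case")
```

Higher is better here, since the score is in bops, so a positive percentage means the configuration beats the worst case.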

The full set of results, with plots about all the statistical properties of the data can be found here.

Are we on the case then?

We sure are! Preliminary patches have been posted to the xen-devel mailing list, and the results of some (preliminary as well, of course) benchmarks are available on this Wiki article. A new blog post expanding on their aims, features, and performance will follow. In the meantime, should you feel like helping with testing, benchmarking and fixing things, please, jump in!

And the moral of the story is…

Yes, my dear NUMA pieces of hardware out there, the Xen.org community is staring at you right in the eyes, and we will get the best out of you, no matter how hard it will be… “Lower your shields and surrender. Resistance is futile”!